Troubleshoot

The `Single.usdc` file references an external USD sublayer that is missing.

For the USD file to load correctly, it needs the following directory structure:

    ├── Single.usdc
    └── obj/
        └── Fern_v1/
            └── Out_Growth.usd

For the USD Stage to load the file correctly, one action is required:

- Place the `obj` folder in the same directory as `Single.usdc`.

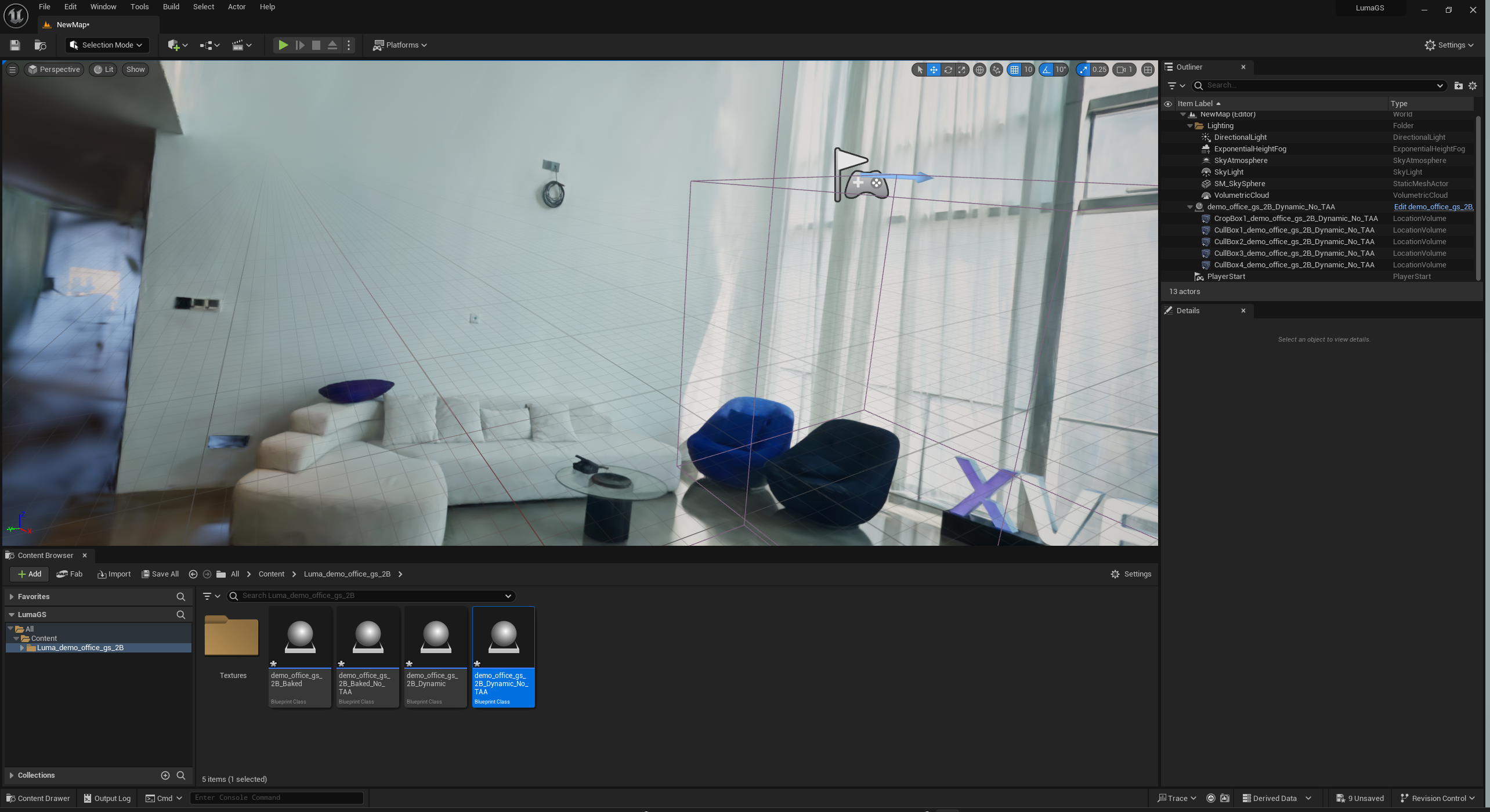
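Before opening the stage in Unreal Engine, you can quickly confirm the layout by checking that the sublayer exists relative to `Single.usdc`. The following is a minimal plain-Python sketch. It assumes that `obj/Fern_v1/Out_Growth.usd` is the only sublayer the file expects. The function name `missing_sublayers` is hypothetical. The sketch does not parse the binary `.usdc` file. If the USD Python bindings are installed and the file is authored as a sublayer, `Sdf.Layer.FindOrOpen(...).subLayerPaths` could be used to read the actual list instead.

```python
from pathlib import Path

# Assumption: the sublayer path(s) Single.usdc expects, taken from the
# directory layout above and expressed relative to the .usdc file.
EXPECTED_SUBLAYERS = ("obj/Fern_v1/Out_Growth.usd",)


def missing_sublayers(usd_file, expected=EXPECTED_SUBLAYERS):
    """Return the expected sublayer paths that do not exist next to usd_file.

    Hypothetical helper: it only checks the file system and does not
    read the sublayer list stored inside the USD file.
    """
    # Sublayer paths are resolved relative to the folder holding the .usdc file.
    root = Path(usd_file).resolve().parent
    return [rel for rel in expected if not (root / rel).is_file()]
```

For example, `missing_sublayers("Single.usdc")` returns an empty list once the `obj` folder sits next to the file. Otherwise, it lists the paths that still need to be copied.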

Render Test

USD GeoCache in Sequencer

Known issues:

1. Edits made in the default Level Sequence are lost when Unreal Engine restarts. Create a new Level Sequence for the `.usdc` file and set up the Geometry Cache in it before rendering.

2. Material assignments are also reset when Unreal Engine restarts. To do: find a way to override the materials on the USD Stage through a Blueprint.

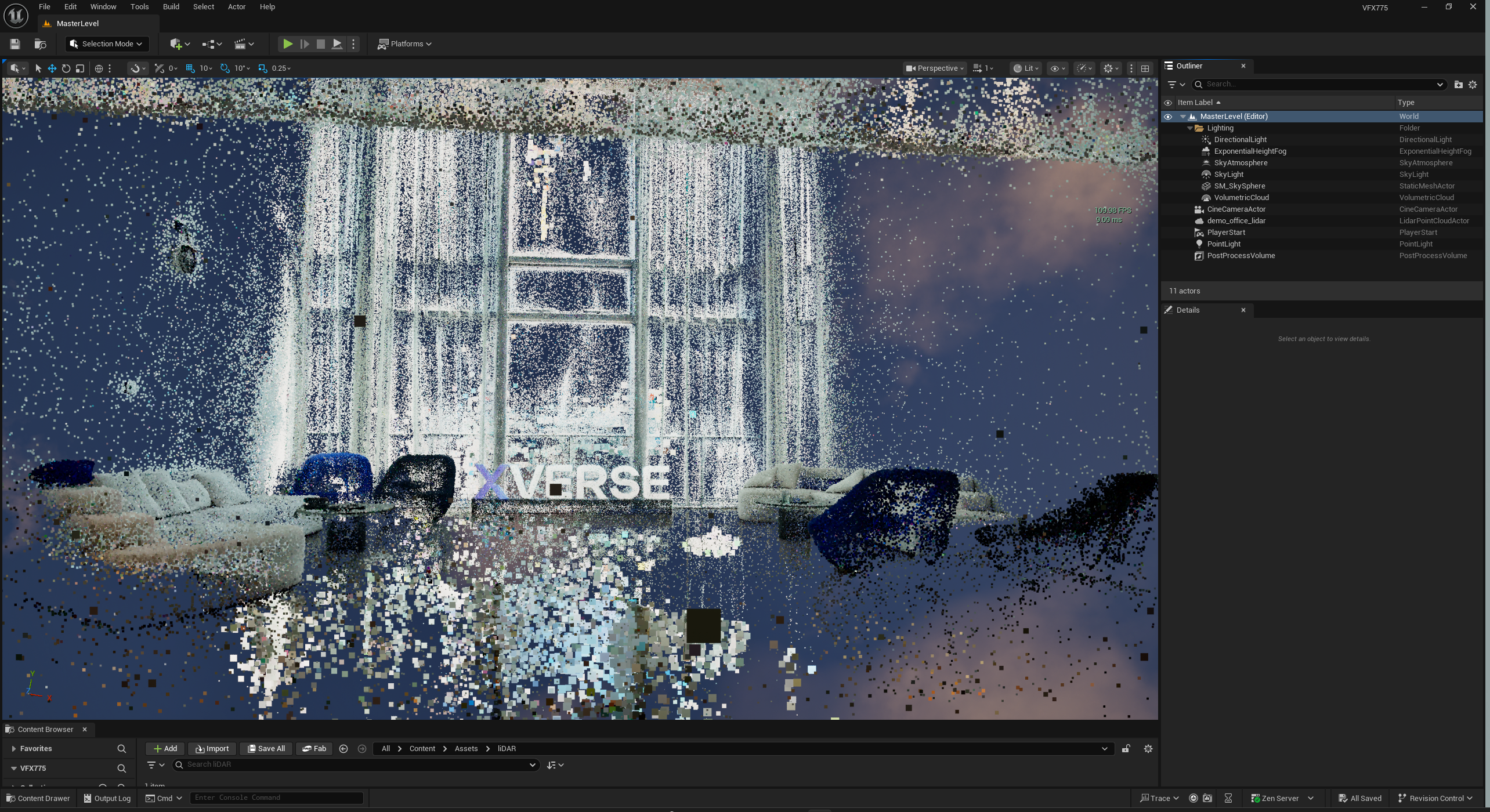

3. Some rendered frames show strange visual artifacts.
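When you set up the Geometry Cache in a new Level Sequence, its section should cover the frames of the USD animation. The following is a minimal sketch. It assumes the stage's `startTimeCode`, `endTimeCode` and `timeCodesPerSecond` metadata describe the animation, and that the Level Sequence runs at its own display frame rate. The helper `usd_range_to_frames` is hypothetical and is not part of the Unreal or USD APIs. The example values are illustrative and not taken from `Single.usdc`.

```python
def usd_range_to_frames(start_time_code, end_time_code,
                        time_codes_per_second, frame_rate):
    """Map a USD time-code range to first/last frame numbers at frame_rate.

    Hypothetical helper: time code / timeCodesPerSecond gives seconds,
    and seconds * frame_rate gives the Sequencer frame number.
    """
    if time_codes_per_second <= 0 or frame_rate <= 0:
        raise ValueError("time_codes_per_second and frame_rate must be positive")
    scale = frame_rate / time_codes_per_second
    # Round to the nearest whole frame (Python's round, ties go to even)
    # so small floating-point errors do not shift the range.
    first = round(start_time_code * scale)
    last = round(end_time_code * scale)
    return first, last
```

For instance, a stage authored at 24 time codes per second with time codes 0 to 48 maps to frames 0 to 60 in a 30 fps Level Sequence. When both rates match, the range is unchanged.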